A proposed class-action lawsuit filed in California on April 1, 2026 — first reported by Bloomberg — alleges that Perplexity AI embedded undisclosed tracking technology that transmitted user data, including sensitive search queries, to Meta and Google via server-side mechanisms that bypassed incognito/private browsing mode.

The lawsuit alleges violations of the California Consumer Privacy Act (CCPA) and California’s Electronic Communications Privacy Act (CalECPA), and seeks class-action status representing affected California users.

For compliance officers, privacy lawyers, and risk managers, this case raises questions that extend well beyond Perplexity:

- When does server-side analytics become an unauthorized “sale” or “sharing” under CCPA?

- What vendor due diligence obligations apply to AI tools your employees are using?

- What are the disclosure requirements for AI platforms collecting sensitive query data?

- How does CalECPA apply to AI search and assistant tools?

This analysis examines each of these questions and provides a compliance framework for organizations navigating AI tool use in 2026.

The Allegations: A CCPA Primer

To understand the compliance implications, it’s worth being precise about what’s alleged and how it maps to specific statutory provisions.

What the Lawsuit Alleges

The complaint alleges that Perplexity AI:

- Embedded undisclosed tracking technology: The trackers were allegedly “undetectable” through normal means because they were not client-side JavaScript visible to browser tools but server-side transmissions to third-party platforms.

- Transmitted user data to Meta and Google: Via server-to-server APIs (such as Meta’s Conversions API and Google’s Measurement Protocol), user data including search queries was allegedly sent to these platforms without user knowledge or consent.

- Did not disclose this practice adequately: The alleged tracking was not clearly disclosed in Perplexity’s privacy policy in a manner that satisfied CCPA’s transparency requirements.

- Continued tracking in private/incognito browsing sessions: Because the tracking was server-side, users who reasonably believed incognito mode provided protection were not protected.

- Collected and transmitted sensitive personal information: AI search queries frequently contain personal health information, financial details, and other sensitive data protected under CCPA’s heightened sensitive personal information provisions.

How CCPA Maps to These Allegations

Section 1798.100 (Notice at Collection): CCPA requires businesses to inform consumers, at or before the point of collection, about the categories of personal information collected and the purposes for which they will be used. If Perplexity transmitted query data to Meta and Google without disclosing the practice, it potentially violated this disclosure obligation.

Section 1798.120 (Right to Opt Out of Sale/Sharing): CCPA distinguishes between “sale” (disclosure for monetary or other valuable consideration) and “sharing” (disclosure for cross-context behavioral advertising, whether or not any consideration changes hands). Transmitting user data to advertising platforms like Meta and Google for advertising purposes could therefore constitute “sharing” under CCPA even if Perplexity received no payment for the transmissions. Businesses that sell or share personal information must provide a clear and conspicuous “Do Not Sell or Share My Personal Information” opt-out link.

Section 1798.121 (Sensitive Personal Information): As amended by the CPRA, CCPA treats sensitive personal information as a special category. It includes “personal information collected and analyzed concerning a consumer’s health,” precise geolocation, financial account credentials, and the contents of a consumer’s mail, email, and text messages. AI search queries routinely contain this type of information. Consumers can direct a business to limit its use of sensitive personal information to what is necessary to provide the requested service, so using health-related queries for advertising could violate this provision.

Section 1798.150 (Private Right of Action): The CCPA’s private right of action is triggered when a business fails to implement reasonable security practices and a breach exposes certain categories of personal information. Statutory damages range from $100 to $750 per consumer per incident, or actual damages if greater. Plaintiffs would need to show that the alleged transmissions fit this data-breach framing, which is likely to be contested. For a class action with thousands of class members, the potential liability is substantial.
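To make the exposure arithmetic concrete, here is a minimal sketch that multiplies the statutory range by a class size. The class size of 10,000 and the assumption of one incident per consumer are illustrative only; neither figure comes from the complaint.

```python
STATUTORY_MIN_USD = 100  # per consumer per incident (Section 1798.150)
STATUTORY_MAX_USD = 750


def statutory_damages_range(class_size: int, incidents_per_consumer: int = 1) -> tuple[int, int]:
    """Return (low, high) statutory exposure in USD, ignoring actual damages."""
    if class_size < 0 or incidents_per_consumer < 0:
        raise ValueError("counts must be non-negative")
    units = class_size * incidents_per_consumer
    return units * STATUTORY_MIN_USD, units * STATUTORY_MAX_USD


# Hypothetical class size chosen only to illustrate the arithmetic.
low, high = statutory_damages_range(class_size=10_000)
print(f"${low:,} to ${high:,}")  # $1,000,000 to $7,500,000
```

Because the range scales linearly with class size, a class ten times larger carries ten times the exposure at both ends.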

The CalECPA Dimension

The California Electronic Communications Privacy Act (CalECPA), enacted in 2015 and codified at Penal Code Section 1546 et seq., governs access to electronic communication information. Its inclusion matters because the theory it supports differs from CCPA’s: it targets the capture of communications in transit, not just the storage and use of personal information.

CalECPA Section 1546 et seq.: The statute’s core provisions restrict government entities from compelling or accessing electronic communication information without a warrant or other legal process. “Electronic communication” is broadly defined and covers data transmitted over networks. Interception claims against private companies are more commonly pleaded under the California Invasion of Privacy Act (Penal Code Section 631), so applying CalECPA to a private platform would test the statute’s reach.

The relevant legal question: When a user types a query into Perplexity and that query is simultaneously transmitted to Meta’s servers without the user’s knowledge or consent, does that constitute “interception” of an electronic communication? The argument is that the communication (the query) was intended for Perplexity, and the transmission to Meta was an unauthorized interception.

Interception-style claims can carry statutory damages that do not depend on the data-breach trigger in CCPA’s private right of action. This is likely why CalECPA is included in the complaint alongside CCPA.

Precedent concern: If a court accepts this theory — that server-side transmission of AI queries to advertising platforms constitutes CalECPA interception — it could dramatically expand liability for AI tools and web services that use server-side analytics without clear user disclosure.

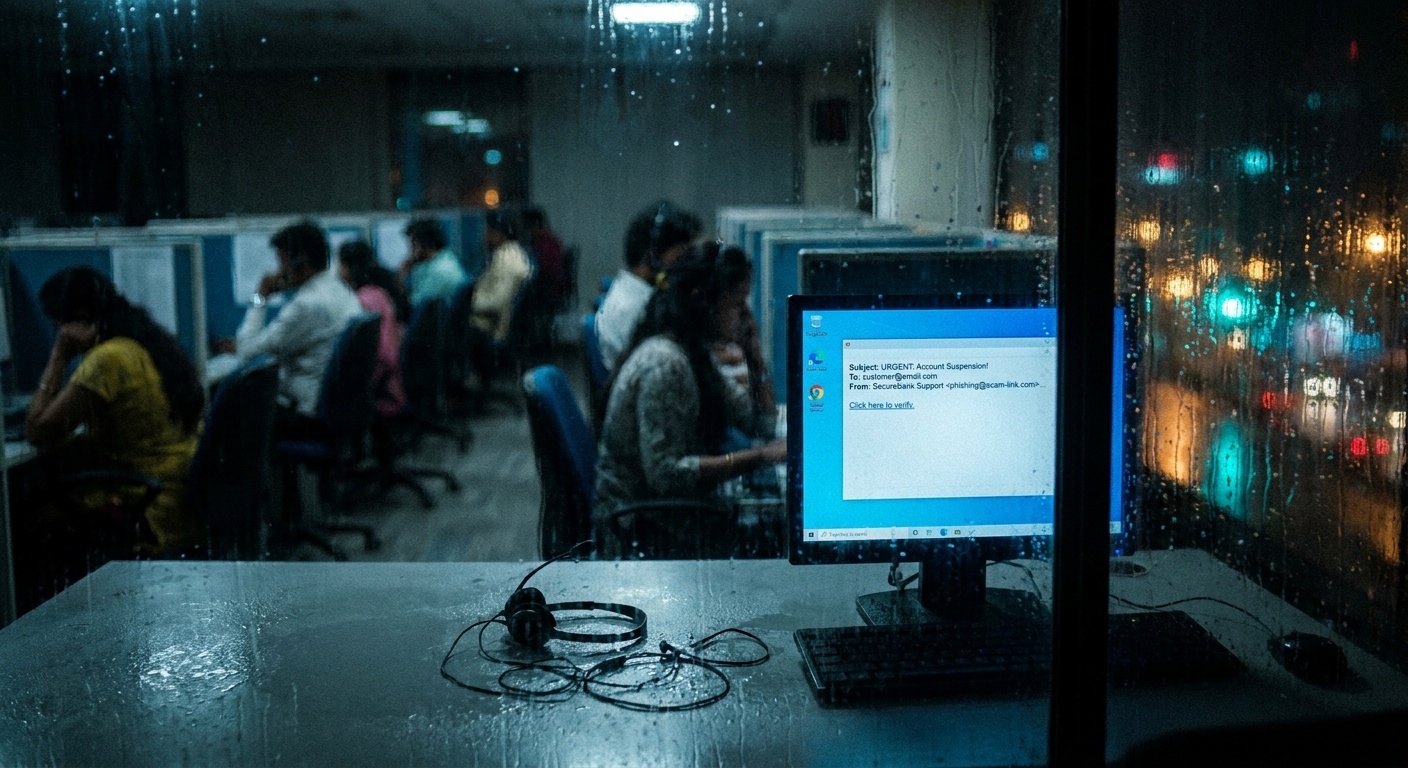

Server-Side Tracking: The Compliance Blind Spot

The technical mechanism at the heart of this lawsuit — server-side tracking — is a compliance blind spot for many privacy programs because traditional privacy compliance frameworks were built around client-side tracking.

How Server-Side Tracking Works (and Why Traditional Controls Miss It)

Traditional privacy compliance focused on:

- Cookie consent banners (blocking client-side JavaScript)

- Browser-based tracking disclosures

- Analytics vendor disclosures in privacy policies

Server-side tracking bypasses all of these controls:

When Perplexity receives a user query on its servers, it can make server-to-server API calls to Meta’s Conversions API or Google Analytics 4’s Measurement Protocol. These calls are made from Perplexity’s servers to Meta’s/Google’s servers. Your browser is not involved. Your cookie consent preference is irrelevant. Your incognito mode has no effect.
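The server-to-server pattern described above can be sketched as follows. The request shape follows Google's publicly documented GA4 Measurement Protocol (a POST to `/mp/collect` with `measurement_id` and `api_secret` query parameters and a JSON body containing `client_id` and `events`). The function name, event name, parameter name, and all identifiers are placeholders, and nothing here is drawn from the complaint or from Perplexity's code. The sketch only builds the request; it does not send it.

```python
import json
import urllib.parse
import urllib.request

GA4_ENDPOINT = "https://www.google-analytics.com/mp/collect"


def build_server_side_event(query: str, client_id: str,
                            measurement_id: str, api_secret: str) -> urllib.request.Request:
    """Build (but do not send) a server-to-server analytics request."""
    params = urllib.parse.urlencode(
        {"measurement_id": measurement_id, "api_secret": api_secret}
    )
    body = {
        "client_id": client_id,
        # Hypothetical event: the user's query travels in the event parameters.
        "events": [{"name": "search", "params": {"search_term": query}}],
    }
    return urllib.request.Request(
        url=f"{GA4_ENDPOINT}?{params}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Placeholder identifiers; a real backend would pass req to urllib.request.urlopen.
req = build_server_side_event("chest pain at night", "555.123", "G-XXXXXXX", "secret")
print(req.get_method(), req.full_url)
```

The request originates on the platform's own server, so no cookie banner, content blocker, or private-browsing setting in the user's browser ever sees it.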

For compliance programs, this means:

- Your vendor privacy assessments need to specifically ask about server-side analytics: A vendor answering “yes” to “do you have a GDPR-compliant cookie policy” may still be running server-side tracking that’s completely outside the cookie framework.

- Privacy policy review needs to examine data flow diagrams, not just policy text: A privacy policy that doesn’t explicitly disclose server-side data sharing may still be technically accurate but functionally misleading.

- Third-party sub-processor lists need to include server-side analytics vendors: Meta and Google’s server-side tracking APIs are functionally sub-processors of any company that uses them, but they often don’t appear in vendor inventories because the integration is entirely server-side.

The Vendor Due Diligence Implication

If your organization is using AI tools that process sensitive employee or customer data, you have an independent compliance obligation to understand those tools’ data flows — including server-side tracking.

Questions your vendor due diligence should now include:

- “Do you use server-side analytics or conversion tracking APIs that transmit user query data to third parties?”

- “Which specific third parties receive user data via server-to-server integrations?”

- “Do these transmissions occur for users in private/incognito browsing sessions?”

- “Do you have documented data processing agreements (DPAs) with all third-party recipients of user data?”

- “Can users opt out of third-party data sharing at the time of their query?”

If a vendor can’t answer these questions clearly, that’s a material gap in their privacy program — and potentially a gap in your CCPA and GDPR compliance programs if you’re directing California or EU users to use their platform.
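One way to operationalize the questions above is to track them as structured data and flag any that a vendor left blank. This is a minimal sketch: the keys, the `unanswered` helper, and the sample responses are all hypothetical, and a blank-answer check is only a first pass before a human reviews the substance of each reply.

```python
from typing import Optional

QUESTIONS = {
    "server_side_tracking": "Do you use server-side analytics or conversion tracking APIs "
                            "that transmit user query data to third parties?",
    "recipients": "Which specific third parties receive user data via server-to-server integrations?",
    "private_sessions": "Do these transmissions occur for users in private/incognito browsing sessions?",
    "dpas": "Do you have documented DPAs with all third-party recipients of user data?",
    "opt_out": "Can users opt out of third-party data sharing at the time of their query?",
}


def unanswered(responses: dict[str, Optional[str]]) -> list[str]:
    """Return the question keys a vendor skipped or left blank."""
    return [key for key in QUESTIONS if not (responses.get(key) or "").strip()]


# Hypothetical vendor reply with one blank answer and two missing answers.
vendor_reply = {"server_side_tracking": "Yes", "recipients": "  ", "dpas": "Yes, on request"}
for key in unanswered(vendor_reply):
    print(f"GAP: {QUESTIONS[key]}")
```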

AI Queries as Sensitive Personal Information Under CCPA

The sensitive personal information provisions of CCPA are particularly relevant here because AI search queries routinely capture data in explicitly protected categories.

CCPA defines sensitive personal information to include:

“Personal information that reveals… [a consumer’s] racial or ethnic origin, religious or philosophical beliefs, or union membership; … the contents of a consumer’s mail, email, and text messages… a consumer’s genetic data; the processing of biometric information for the purpose of uniquely identifying a consumer; personal information collected and analyzed concerning a consumer’s health; personal information collected and analyzed concerning a consumer’s sex life or sexual orientation.”

AI assistant conversations can touch many of these categories, often within a single session. Users ask AI assistants about:

- Medical symptoms, medications, health conditions (health information)

- Religious practices, ethical frameworks, dietary restrictions (religious beliefs)

- Financial situations, debt, bankruptcy (financial information)

- Relationship status, sexual health (personal/sexual information)

- Immigration status (protected status)

Under CCPA’s sensitive personal information provisions, businesses are limited to using this data “for the purposes for which it was collected” — providing the requested service — and must offer users an additional right to limit its use.

The compliance implication: If Perplexity collected sensitive personal information via AI queries and transmitted it to Meta and Google for advertising or analytics purposes, this potentially violates CCPA’s sensitive personal information use limitations, not just the general sharing prohibition.

For businesses deploying AI tools: If your employees are using AI assistants that process customer or patient information, you need to ensure those tools’ data use is limited to the service purpose and doesn’t include third-party sharing for advertising or analytics.

Regulatory Trend: AI Privacy Enforcement in 2026

This lawsuit doesn’t exist in a vacuum. It’s part of an accelerating regulatory trend in 2026 that compliance programs need to anticipate.

California Privacy Protection Agency (CPPA) AI Focus

The CPPA (the agency that enforces CCPA) has indicated that AI systems are a priority enforcement area in 2026. The agency’s rulemaking agenda includes:

- Automated decision-making technology rules — How AI decisions affecting consumers must be disclosed and what opt-out rights apply

- AI training data CCPA compliance — When the use of consumer data to train AI models requires consumer notice and consent

- AI-specific sensitive personal information rules — Proposed rules treating AI query data involving health, financial, or other sensitive topics as automatically subject to heightened protection

If these rules are finalized in their current form, AI platforms collecting sensitive query data without heightened consent and disclosure mechanisms will face enforcement risk beyond this private lawsuit.

Federal ADPPA Implications

The American Data Privacy and Protection Act (ADPPA) has been progressing through Congress in 2026. The current draft includes provisions that would:

- Create a federal private right of action for privacy violations (currently only a few states including California have this)

- Establish a national standard for sensitive personal information, including AI-generated inferences

- Require affirmative consent for targeted advertising that uses sensitive personal information

If ADPPA passes in its current form, the type of tracking alleged in the Perplexity lawsuit would potentially create federal liability for all U.S. users, not just California residents.

EU AI Act Interactions

For compliance officers at organizations with EU operations: the EU AI Act, now in phased implementation, classifies AI systems as “high-risk” largely by intended use rather than by data category alone. AI search and assistant tools that process health, financial, or other GDPR-sensitive data in the EU should be assessed against those use cases. Systems that qualify as high-risk require conformity assessments under the AI Act, including documentation of data governance practices.

The Perplexity lawsuit will be watched closely by EU data protection authorities as they develop enforcement positions on AI data practices.

The Disclosure Adequacy Problem

One of the central legal questions in this case will be whether Perplexity’s privacy policy adequately disclosed the practices at issue. CCPA requires disclosures to be “clear and conspicuous” — but what does that mean for server-side tracking that most users don’t know exists?

The current state of AI privacy disclosures:

Most AI platform privacy policies describe data use in general terms:

- “We may use your data to improve our services”

- “We work with trusted third-party analytics providers”

- “We may share data with partners for service delivery”

None of these formulations clearly describes: “When you type a query, we simultaneously transmit that query to Meta’s servers via a server-side API for advertising purposes.”

The CCPA “clear and conspicuous” standard has been interpreted by the CPPA to require disclosures that are “reasonably understandable” to the average consumer. There is a strong argument that burying server-side analytics disclosure in broadly-worded privacy policy language doesn’t satisfy this standard.

For compliance programs: This case may establish clearer disclosure standards for server-side AI analytics. Proactively, organizations building AI tools should:

- Enumerate every third-party platform that receives user data, including server-side analytics vendors

- Explain in plain language why data is shared with each vendor

- Provide a clear mechanism to opt out of non-essential sharing before users begin using the service

- Obtain affirmative consent before sharing sensitive personal information with third parties
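The first item in the list above, enumerating every recipient, can be checked mechanically by comparing the recipients observed in actual data flows against those named in the privacy policy. The sketch below assumes you already have both lists (for example, from server egress logs and a policy review); the function name and hostnames are illustrative.

```python
def undisclosed_recipients(observed: set[str], disclosed: set[str]) -> set[str]:
    """Return recipients seen in real data flows but absent from the policy."""
    def normalize(names: set[str]) -> set[str]:
        return {name.strip().lower() for name in names}

    return normalize(observed) - normalize(disclosed)


# Illustrative inputs: hosts seen in egress logs vs. hosts named in the policy.
observed = {"graph.facebook.com", "www.google-analytics.com", "api.stripe.com"}
disclosed = {"API.stripe.com"}

for host in sorted(undisclosed_recipients(observed, disclosed)):
    print(f"Not disclosed: {host}")
```

An empty result does not prove the disclosure is adequate, since wording still needs legal review, but a non-empty one is a concrete gap to close.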


Business Liability: If You’re Using AI Tools That Track Users

The Perplexity lawsuit has direct implications for businesses that deploy AI tools to serve their customers or employees, even though Perplexity is the named defendant.

Scenario 1: Your Business Uses Perplexity or Similar AI Tools for Customer-Facing Functions

If you’ve integrated Perplexity (or any AI tool with undisclosed tracking) into a customer-facing application, you may share liability for the data flows:

- Your customers’ data was processed through Perplexity pursuant to your use of their platform

- If Perplexity transmitted that data to Meta/Google without disclosure, your customers were affected while using your service

- Your customer-facing privacy policy likely didn’t disclose this third-party sharing because you didn’t know it was happening

- CCPA’s service provider relationship requires that you contractually prohibit service providers from retaining or using personal information for purposes other than providing the contracted services

Risk mitigation:

- Audit all AI tools integrated into customer-facing applications for their data sharing practices

- Review your DPAs with AI vendors for explicit prohibitions on unauthorized third-party sharing

- Ensure your customer-facing privacy policy accurately reflects actual data flows, including AI tool data sharing

- Consider requiring AI vendors to provide technical attestation of their data flow architecture

Scenario 2: Your Employees Are Using AI Tools to Process Customer or Patient Data

Many organizations have employees using AI assistants like Perplexity, ChatGPT, or Copilot to help with work tasks — including tasks involving customer data, patient data, or employee data.

If an employee uses Perplexity to draft a response to a customer complaint and pastes customer data into the query, and Perplexity was transmitting those queries to Meta, your organization has a potential CCPA (and possibly HIPAA) exposure — even though you’re not a Perplexity customer in the traditional sense.

Risk mitigation:

- Establish an AI tool use policy for employees that prohibits using non-approved AI tools with personal data

- Create an approved AI tool list based on privacy assessments of each tool’s data flows

- Train employees on what types of data can and cannot be input into AI assistants

- Implement technical controls (DLP policies, network-level blocking) to enforce the policy
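The approved-tool list and DLP control in the list above can be combined into a single pre-send check. This is a deliberately simple sketch, assuming a hypothetical approved host and two regex patterns; production DLP tools use far richer detection, and regexes like these will produce both false positives and false negatives.

```python
import re

APPROVED_AI_HOSTS = {"ai.internal.example.com"}  # hypothetical approved-tool list

# Illustrative patterns only; real DLP policies cover many more data types.
PATTERNS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def check_prompt(host: str, prompt: str) -> tuple[bool, list[str]]:
    """Return (allowed, findings) for a prompt bound for an AI tool."""
    findings = [name for name, pattern in PATTERNS.items() if pattern.search(prompt)]
    # Personal data may only go to approved tools; clean prompts may go anywhere.
    allowed = host in APPROVED_AI_HOSTS or not findings
    return allowed, findings


allowed, findings = check_prompt(
    "chat.unapproved.example",
    "Reply to jane@example.com about SSN 123-45-6789",
)
print(allowed, findings)  # False ['us_ssn', 'email']
```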

Scenario 3: You’re an AI Platform Operator

If you operate an AI platform or tool that processes user queries:

- Review your server-side analytics integrations immediately

- Ensure every third-party recipient of user query data is explicitly disclosed in your privacy policy

- Verify that server-side data sharing can be controlled by your opt-out mechanisms

- Ensure sensitive personal information provisions of CCPA are implemented in your data flows — not just in policy

- Obtain privacy counsel review of your disclosure practices in light of this lawsuit
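For platform operators, the key engineering point in the list above is that opt-out and sensitive-data rules have to be enforced in the server-side code path, not only in the cookie banner. The sketch below shows one possible gate. The `QueryEvent` fields and the `may_forward_to_ad_platform` function are hypothetical, the sensitivity flag is assumed to come from an upstream classifier, and the consent rule reflects this article's recommendations rather than a legal determination.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QueryEvent:
    user_id: str
    query: str
    opted_out: bool                  # user exercised "Do Not Sell or Share"
    contains_sensitive: bool         # set by an upstream classifier (not shown)
    sensitive_consent: bool = False  # affirmative consent to share sensitive data


def may_forward_to_ad_platform(event: QueryEvent) -> bool:
    """Decide whether a server-side integration may forward this event."""
    if event.opted_out:
        return False
    if event.contains_sensitive and not event.sensitive_consent:
        return False
    return True


event = QueryEvent("u1", "side effects of metformin", opted_out=False, contains_sensitive=True)
print(may_forward_to_ad_platform(event))  # False
```

Placing the gate immediately before the outbound API call means a browser-level preference and a server-level transmission cannot drift apart.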

Compliance Action Checklist

Immediate Actions (Within 30 Days)

Privacy Policy Audit:

- Review your AI tool vendors’ privacy policies for server-side analytics disclosures

- Identify any AI vendors transmitting user data to advertising platforms (for example, via Meta’s Conversions API or Google’s Measurement Protocol) without explicit user consent

- Verify your own privacy policy accurately reflects all data flows, including server-side analytics

Vendor Due Diligence:

- Add server-side tracking questions to vendor privacy assessments

- Request updated DPAs from AI vendors explicitly prohibiting unauthorized third-party sharing

- Identify which AI tools your employees use with personal data and assess each for CCPA compliance

Employee AI Use Policy:

- Establish or update your AI tool use policy to address data classification restrictions

- Identify approved AI tools based on privacy assessments

- Implement training on what data can be input into AI assistants

Short-Term Actions (30–90 Days)

Technical Controls:

- Implement DLP controls that prevent sensitive data from being sent to unapproved AI platforms

- For customer-facing AI integrations, conduct a data flow audit to verify all third-party sharing

- Evaluate whether server-side tracking in your own applications is appropriately disclosed and consented to

Legal and Regulatory Monitoring:

- Monitor this lawsuit for settlement terms or court rulings that establish disclosure standards

- Track CPPA rulemaking on AI and automated decision-making technology

- Assess ADPPA progress for potential federal private right of action implications

- For EU operations, assess AI Act applicability to AI tools processing sensitive personal data

Board and Executive Reporting:

- Brief your privacy officer, legal counsel, and CISO on this lawsuit and its implications

- Add AI tool vendor risk to your privacy risk register

- Establish a process for evaluating new AI tool adoptions through a privacy lens

The Precedent That Could Change AI Privacy

The most significant compliance implication of this lawsuit may not be about Perplexity specifically — it’s about the legal framework it could establish for AI privacy more broadly.

If the court rules for plaintiffs on the server-side tracking theory:

- Disclosure standard clarified — All AI platforms must explicitly disclose server-side third-party data sharing in plain language, not buried in generic privacy policy language

- Sensitive query data triggers heightened CCPA protections — AI queries containing health, financial, or other sensitive information will be treated as sensitive personal information requiring opt-in consent for sharing

- Incognito mode creates a reasonable expectation — Courts may recognize that users in private browsing mode have a reasonable expectation that their sessions are not being tracked, creating liability for platforms that track anyway

If the court rules against plaintiffs:

- Existing CCPA disclosure standards may be deemed sufficient for server-side analytics — which would be a significant setback for AI privacy

- Private browsing mode expectations reduced — courts affirm that users bear responsibility for understanding technical limitations of their privacy tools

- Enforcement focus shifts to CPPA — plaintiff attorneys may focus energy on supporting CPPA regulatory action rather than private litigation

Either way, this lawsuit marks a turning point in how privacy law is applied to AI tools. The practices alleged — collecting sensitive AI search queries and sharing them with advertising platforms without clear disclosure — will face heightened legal scrutiny from this point forward.

Compliance programs that get ahead of this now — by auditing AI tool vendors, strengthening DPAs, and implementing clear disclosure practices — are better positioned than those that wait for enforcement.

Conclusion: AI Vendor Risk Is Now CCPA Risk

The Perplexity lawsuit is a potential watershed for AI privacy compliance. Even before any ruling, it puts AI platforms on notice that their privacy disclosures will be measured against the same CCPA standard as any other data collection practice: clear, conspicuous, and specific.

For compliance officers, the takeaways are clear:

- AI tool vendors should be treated like any other service provider under CCPA: They require the same vendor due diligence and contractual restrictions (DPAs) as any other vendor that processes personal data

- Server-side tracking is in scope — CCPA doesn’t distinguish between client-side and server-side data sharing; the requirement is adequate disclosure and opt-out rights regardless of the technical mechanism

- Sensitive AI query data deserves heightened protection — The fact that users ask AI tools about health, finances, and personal matters doesn’t reduce their privacy rights; it heightens them

- Employee AI tool use is an organizational risk — If your employees are processing personal data through AI tools, your organization may share exposure for those tools’ privacy practices

The intersection of AI capabilities and privacy law is moving fast. The organizations that treat this as a compliance priority today will be better positioned as regulatory and legal standards continue to evolve.

References

- Bloomberg: Perplexity AI accused of sharing data with Meta, Google — https://www.bloomberg.com/news/articles/2026-04-01/perplexity-ai-machine-accused-of-sharing-data-with-meta-google

- LiveMint coverage — https://www.livemint.com/technology/tech-news/perplexity-ai-accused-of-embedding-undetectable-trackers-for-secretly-routing-sensitive-user-data-to-meta-and-google-11775013680758.html

- California Consumer Privacy Act (CCPA) — Full Text — https://oag.ca.gov/privacy/ccpa

- California Electronic Communications Privacy Act (CalECPA) — https://leginfo.legislature.ca.gov/faces/billNavClient.xhtml?bill_id=201520160SB178

- CPPA: Automated Decision-Making Technology Rulemaking — https://cppa.ca.gov/regulations/

- NIST AI Risk Management Framework — https://www.nist.gov/artificial-intelligence

- EU AI Act — Official Text — https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX:32024R1689

ComplianceHub.wiki provides resources for compliance officers, risk managers, and legal counsel navigating the intersection of technology and regulation. Content is educational and does not constitute legal advice. Consult qualified legal counsel for organization-specific guidance.