Two of Silicon Valley’s biggest scandals of 2026 were always on a collision course. It just took a class action complaint filed in the Northern District of Texas to make the connection explicit.

On one side: Mercor, a $10 billion AI recruiting startup that supplies domain experts to OpenAI, Anthropic, and Meta for model training. On the other: Delve Technologies, a Y Combinator-backed compliance automation startup accused of fabricating hundreds of SOC 2 and ISO 27001 certifications. In between: LiteLLM, an open-source Python library downloaded 95 million times per month, whose compromised PyPI packages gave attackers the keys to Mercor’s kingdom.

The First Amended Class Action Complaint — White and Beltran v. Mercor.io Corporation, Delve Technologies, Inc., and Berrie AI Incorporated (Case No. 6:26-CV-00143-H) — doesn’t just allege a data breach. It alleges a systemic chain of negligence and fraud spanning fake compliance certifications, invasive workplace surveillance sold to LLM companies, and a supply chain attack that exposed the biometric data, video interviews, and identity documents of tens of thousands of contractors.

This is the story of how the AI industry’s trust infrastructure collapsed in a single month.

The Breach: 40 Minutes, 4 Terabytes

On March 27, 2026, a threat actor group known as TeamPCP — a hacking collective with suspected nation-state connections — compromised the PyPI publishing credentials for the LiteLLM library and injected a three-stage malicious backdoor into versions 1.82.7 and 1.82.8.

TeamPCP didn’t start with LiteLLM. The group had previously compromised Trivy, a widely used open-source security scanner maintained by Aqua Security, as part of a broader campaign targeting CI/CD pipelines. Groups like Cl0p have used the same supply chain strategy to devastating effect against enterprise software vendors. Through the Trivy attack, TeamPCP obtained credentials belonging to a LiteLLM maintainer and used them to publish poisoned packages directly to PyPI.

The malicious code was designed to harvest authentication credentials and establish persistent system access. Version 1.82.7 embedded base64-encoded malware directly into the library’s proxy server code, executing on import. Version 1.82.8 went further, using .pth file injection to execute on every Python interpreter startup — meaning the malware persisted even after the package itself was removed.
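The .pth mechanism is worth seeing concretely. Python’s site module reads every .pth file in a site-packages directory at startup and executes any line that begins with "import" followed by a space or tab. The following is a minimal sketch, not a detection tool: the function names are illustrative, and it only lists such lines so a human can review them.

```python
from pathlib import Path
import sysconfig


def find_executable_pth_lines(directory):
    """Return (file name, line number, line) for each .pth line Python would execute."""
    findings = []
    for pth_file in sorted(Path(directory).glob("*.pth")):
        text = pth_file.read_text(encoding="utf-8", errors="replace")
        for number, line in enumerate(text.splitlines(), start=1):
            # The site module executes lines starting with "import" plus a
            # space or tab; other non-comment lines are treated as path entries.
            if line.startswith(("import ", "import\t")):
                findings.append((pth_file.name, number, line.strip()))
    return findings


def site_directories():
    """Site-packages directories of the running interpreter."""
    paths = sysconfig.get_paths()
    return sorted({paths["purelib"], paths["platlib"]})


if __name__ == "__main__":
    for directory in site_directories():
        for name, number, line in find_executable_pth_lines(directory):
            print(f"{directory}/{name}:{number}: {line}")
```

Legitimate tooling also ships .pth files with import lines, so a hit is a prompt for review rather than proof of compromise. The point is that this code path runs on every interpreter start, whether or not the package that installed it is still present.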

The poisoned packages were live for roughly 40 minutes before security researcher Callum McMahon of FutureSearch discovered the compromise when his machine crashed from a fork-bomb side effect. PyPI quarantined the entire litellm package by 13:38 UTC.

Forty minutes was enough.

With 3.4 million daily downloads, thousands of systems pulled the compromised packages automatically. LiteLLM is estimated to be present in 36% of all cloud environments. According to Wiz, credentials and secrets stolen in the supply chain compromise were quickly validated and used to explore victim environments and exfiltrate additional data.
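For teams wondering whether an environment currently holds one of the two versions named above, a first-pass check can be sketched with the standard library alone. The helper names are illustrative, and the version set comes only from this article.

```python
from importlib import metadata

# Versions named in this article as carrying the backdoor.
COMPROMISED_LITELLM_VERSIONS = frozenset({"1.82.7", "1.82.8"})


def is_compromised(version, bad_versions=COMPROMISED_LITELLM_VERSIONS):
    """True if the version string is one of the reported bad releases."""
    return version in bad_versions


def installed_version(package):
    """Installed version of a package, or None if it is not installed."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def check_environment(package="litellm"):
    """Describe the state of the package in the current environment."""
    version = installed_version(package)
    if version is None:
        return f"{package} is not installed in this environment"
    if is_compromised(version):
        return f"{package} {version} is a reported compromised version"
    return f"{package} {version} is not one of the reported versions"


if __name__ == "__main__":
    print(check_environment())
```

A clean result here says nothing about the past. Because version 1.82.8 reportedly persisted through .pth injection, an environment that installed it and later upgraded or uninstalled the package could still be affected, and any credentials it held should be treated as exposed.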

Mercor was one of those victims.

On or about March 30, 2026, criminal hackers exfiltrated approximately four terabytes of sensitive personal data from Mercor’s systems. The stolen cache reportedly comprised: a 211-gigabyte user database, 939 gigabytes of platform source code, approximately three terabytes of storage buckets containing video interviews and passports submitted for identity verification, Tailscale VPN configuration data, internal Slack communications, and ticketing system data.

The extortion group Lapsus$, which security researchers say partnered with TeamPCP to monetize the stolen access, claimed responsibility and began auctioning the data on dark web forums. Mercor spokesperson Heidi Hagberg confirmed the company experienced a security incident, characterizing it as affecting “one of thousands of companies” impacted by the LiteLLM compromise.

But the class action complaint argues this framing understates Mercor’s culpability — and traces the root cause back further than TeamPCP.

The Compliance Theater: Delve’s “Fake Compliance as a Service”

The complaint’s most explosive allegations concern Delve Technologies, a compliance automation startup founded in 2023 by MIT dropouts Karun Kaushik (CEO) and Selin Kocalar (COO). Both were Forbes 30 Under 30 honorees. The company graduated from Y Combinator’s Winter 2024 batch, raised a $32 million Series A led by Insight Partners in July 2025 at a $300 million valuation, and claimed over 1,500 customers across 50 countries.

Delve’s pitch was irresistible to cash-strapped startups racing to close enterprise deals: AI-powered compliance that could compress certification timelines from months to days, with bundled SOC 2, ISO 27001, and HIPAA packages priced as low as $6,000 to $15,000 — a fraction of traditional audit costs.

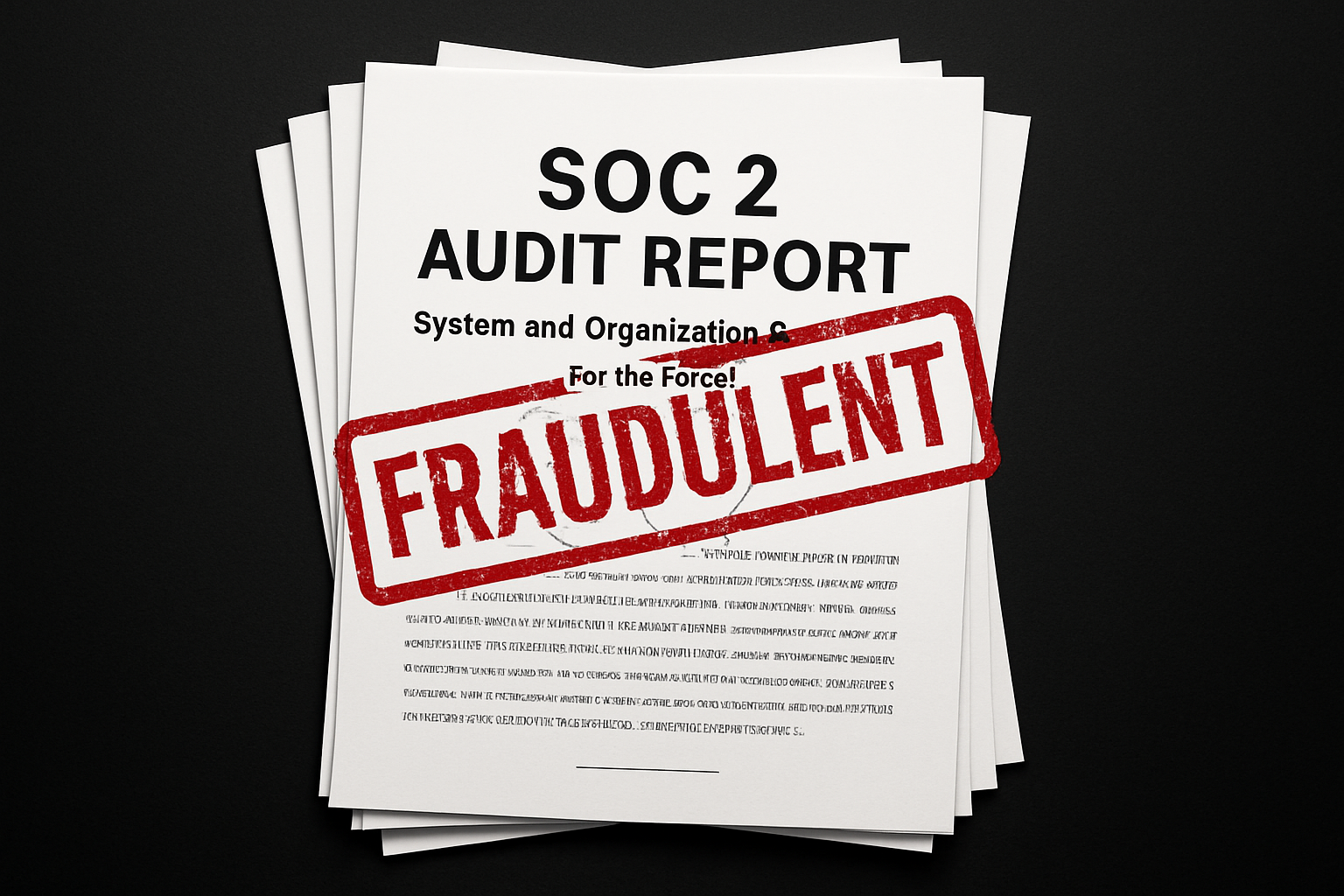

The problem, according to whistleblower evidence and the class action complaint, is that the speed came from fabrication, not automation.

The complaint alleges that beginning in or around 2023 and continuing through at least March 2026, Delve systematically fabricated compliance certifications for its customers. An investigation published in March 2026 revealed that Delve’s compliance reports were generated from identical templates with pre-written auditor conclusions — prepared before customers provided any evidence of actual compliance.

At least 494 fabricated compliance reports have been identified. According to the complaint, Delve generated fake evidence of board meetings, security tests, and compliance processes that never occurred. Delve utilized certification firms that rubber-stamped reports without conducting legitimate audits.

The whistleblower — an anonymous Substack author operating under the pseudonym “DeepDelver” — published a series of explosive posts beginning on March 18, 2026. DeepDelver described themselves as an employee at a former Delve client who, along with other suspicious customers, pooled resources to investigate after Delve notified clients about a leaked spreadsheet containing confidential reports.

Their conclusion: Delve achieved its speed claims by producing fake evidence, generating auditor conclusions on behalf of certification mills, and skipping major framework requirements while telling clients they had achieved full compliance.

The second wave of whistleblower evidence, released on March 28, included internal recordings, screenshots, and Slack communications. The most legally significant content was a recorded conversation between CEO Kaushik and an employee in which Kaushik questioned whether Delve’s audit partner had reviewed any evidence — and then expressed comfort with that arrangement because liability would attach to the auditor, not to Delve.

On April 3, Y Combinator removed Delve from its companies directory. YC President and CEO Garry Tan stated: “The founders in our community have to trust each other, and we have to trust them. When that trust breaks down, there’s really only one thing to do.”

Insight Partners quietly scrubbed its investment thesis article about Delve from its website. Delve’s media inquiry email stopped functioning.

The Chain: Delve → LiteLLM → Mercor

Here is where the class action draws the connection that makes this case unprecedented.

LiteLLM obtained its SOC 2 and ISO 27001 certifications through Delve. LiteLLM prominently displayed these Delve-obtained certifications on its website and marketing materials, representing to customers and downstream users that it maintained robust security controls in compliance with internationally recognized standards.

These representations were material to the decisions of downstream users — including Mercor — to integrate LiteLLM into their technology infrastructure.

The complaint alleges these representations were false or misleading. LiteLLM’s security certifications were obtained through a provider that fabricated compliance reports, and LiteLLM’s actual security posture was insufficient to prevent the compromise.

Following the discovery of the compromise, LiteLLM severed ties with Delve and announced it would pursue recertification through Vanta, a legitimate compliance platform, with an independent third-party auditor. The complaint characterizes this remedial action as an implicit acknowledgment that Delve-obtained certifications were inadequate.

Companies processing protected health information, including those in the healthcare, AI, and fintech sectors, had relied upon Delve’s fabricated HIPAA, SOC 2, and ISO 27001 certifications when representing to their own customers, users, and partners that sensitive data would be handled securely. The global compliance landscape is already straining under unprecedented regulatory complexity. In that environment, fabricated certifications do more than create legal exposure for the companies holding them: they undermine the entire framework of trust that regulators, customers, and partners depend on.

The chain of trust was rotten at its foundation.

The Surveillance: Insightful, Screen Captures, and LLM Training Data

The complaint then pivots to what may be its most disturbing set of allegations: Mercor’s use of invasive screen monitoring software on its contractors, and the subsequent sale of that captured data to AI companies for model training.

According to the complaint, Mercor required contractors to run monitoring software during active work sessions, including an application called Insightful (formerly Workpuls) that captured screenshots of contractors’ computer screens at random points throughout the workday.

These random screen captures indiscriminately recorded whatever was displayed on a contractor’s screen at the moment of capture — including proprietary source code, trade secrets, and confidential business information belonging to the contractors’ primary employers or other clients; personal email correspondence, private messages, and social media content; banking, financial, and medical information visible in browser windows or applications; and attorney-client privileged communications.

The complaint alleges Mercor collected, stored, and retained these screen captures without adequate disclosure to contractors regarding the scope of data being collected, without obtaining informed consent from the owners of the proprietary data captured in the screenshots, and without implementing reasonable safeguards to prevent the capture and retention of data beyond the scope of the contractors’ Mercor engagements.

Then comes the data pipeline allegation: Mercor sold, licensed, or otherwise provided contractor data — including personal information, recorded interviews, Insightful screen captures, and work product — to unnamed “John Doe Defendants” for use in training and developing large language models. The screen capture data thereby transmitted to these defendants contained proprietary information belonging to third parties who had no knowledge of or relationship with Mercor.

The complaint further alleges a contractual contradiction that borders on the absurd: Mercor required contractors to execute agreements prohibiting them from improperly using or disclosing confidential, proprietary, or secret information of their other employers. Yet Mercor’s own screen monitoring software was designed to indiscriminately capture exactly such information from contractors’ screens. The company imposed contractual obligations on its contractors that its own surveillance practices were engineered to circumvent.

The Lawsuits Pile Up

The White and Beltran complaint filed in the Northern District of Texas is not the only legal action Mercor is facing. Five contractor lawsuits were filed against Mercor in federal courts in California and Texas during the first week of April 2026. For a case-by-case breakdown of every complaint, the legal theories behind each filing, and what compliance professionals should watch for as the litigation develops, see our companion analysis: Five Lawsuits and Counting: A Compliance Breakdown of the Mercor Data Breach Litigation.

The lead California case, Gill v. Mercor.io Corporation, was filed April 1 in the Northern District of California as a proposed nationwide class action. Plaintiff Lisa Gill, a Hawaii resident, alleges Mercor failed to implement multi-factor authentication, encrypt sensitive data during storage and transmission, limit access to PII, monitor systems for suspicious activity, or rotate passwords regularly.

The Esson v. Mercor complaint, brought by contractor NaTivia Esson, who worked for Mercor from March 2025 through March 2026, alleges she submitted W-9 forms with personal identifying information each time she received work — trusting the company would use reasonable measures to protect it.

What makes the White and Beltran Texas complaint unique is its scope: it names not just Mercor but also Delve Technologies, BerriAI (LiteLLM’s creator), and “John Doe LLM Companies 1-10” — the unnamed AI labs that allegedly received contractor data harvested through Mercor’s surveillance practices. It is the first complaint to explicitly connect the compliance fraud, the supply chain attack, the contractor surveillance, and the LLM training data pipeline into a single theory of liability.

The Fallout

The downstream consequences are still unfolding.

Meta indefinitely paused all work with Mercor. The move is significant because Mercor was one of a few key firms generating bespoke, proprietary training data for Meta’s AI models, including for TBD Lab, Meta’s core unit working toward AI superintelligence. OpenAI confirmed it was investigating its exposure but had not paused contracts at the time. Anthropic has not publicly commented. Google is understood to be assessing the breach’s scope.

Tan characterized the potential exposure as representing “billions and billions of value and a major national security issue,” noting that an extraordinary amount of state-of-the-art training data was now potentially available to foreign adversaries.

Threat intelligence firms estimate that TeamPCP’s broader campaign — spanning the Trivy, LiteLLM, KICS, and Telnyx compromises — has affected over 1,000 SaaS environments and potentially 500,000 machines. Mandiant Consulting CTO Charles Carmakal confirmed at RSA Conference that the Google-owned incident response firm knew of over 1,000 impacted cloud environments that were actively dealing with the cascading fallout.

For the compliance industry, the Delve scandal has exposed structural questions about the GRC automation market. Delve’s business model depended on producing certifications at a speed and price point that were incompatible with genuine compliance assessment — and it intended those certifications to be relied upon by its customers and by the downstream users and consumers of its customers’ services. For a deeper technical analysis of how Delve’s rubber-stamped certifications enabled the LiteLLM breach specifically, see our companion piece: The Illusion of Trust: How LiteLLM’s Fake SOC 2 and Backdoored Security Scanner Exposed Enterprise Compliance Theater.

What This Means for Security Practitioners

The Mercor-Delve-LiteLLM chain of events is not a single-point failure. It is a systems failure spanning three intersecting domains: open-source software supply chain security, compliance certification integrity, and AI infrastructure governance.

The practical lessons are already crystallizing.

Pin your dependencies. Organizations that installed from lockfiles such as poetry.lock or uv.lock, resolved before the poisoned releases were published, did not pull the malicious LiteLLM packages. Those relying on unpinned or loosely ranged version specifiers inherited the full attack chain. The OWASP LLM Top 10 elevated supply chain vulnerabilities from position five to position three in its 2025 edition for a reason. The PyPI poisoning pattern mirrors what we’ve seen across the npm ecosystem: package registries remain the softest target in the software supply chain.
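As a rough illustration of what an audit for pinning can look like, the sketch below flags lines in a requirements-style file that do not pin an exact version. It is a simplified check under stated assumptions: the function name and regular expression are illustrative, and it does not implement the full requirement-specifier grammar.

```python
import re

# Matches "name==1.2.3", optionally with extras and an environment marker.
PINNED = re.compile(
    r"^[A-Za-z0-9._-]+(?:\[[^\]]*\])?\s*==\s*([^\s;,]+)\s*(?:;.*)?$"
)


def unpinned_requirements(requirements_text):
    """Return requirement lines that are not pinned to one exact version."""
    flagged = []
    for raw in requirements_text.splitlines():
        line = raw.split("#", 1)[0].strip()   # drop comments
        if not line or line.startswith("-"):  # skip blanks and option lines
            continue
        line = line.split(" --", 1)[0]        # drop inline options such as --hash
        line = line.rstrip("\\").strip()      # drop line-continuation backslash
        match = PINNED.match(line)
        # A wildcard such as "==4.*" still floats, so treat it as unpinned.
        if match is None or "*" in match.group(1):
            flagged.append(line)
    return flagged


if __name__ == "__main__":
    sample = "litellm>=1.80\nrequests==2.32.3\n"
    print(unpinned_requirements(sample))
```

An exact pin only fixes the version number. Recording artifact hashes as well, for example with pip’s --require-hashes mode or a lockfile that stores hashes, also guards against a different file being served under the same version.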

Verify compliance independently. A SOC 2 badge is only as good as the auditor behind it. If your vendor obtained certifications through a platform offering bundled SOC 2, ISO 27001, and HIPAA for $6,000 in under 60 days, treat that as a red flag. Request audit partner details. Verify the auditor’s CPA licensure and AICPA peer review status independently. Review the actual report, not just the trust page.

Audit your monitoring tools’ data scope. If your organization requires contractors or employees to run screen capture software, understand exactly what data is being captured, who has access to it, and whether you’re inadvertently ingesting third-party proprietary information that creates legal liability.
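One way to start such an audit is to summarize how many stored captures fall outside the applications that belong to the engagement. The sketch below assumes a hypothetical record schema in which each capture carries the name of the foreground application. It does not reflect the data model of Insightful or any other monitoring product.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class CaptureRecord:
    """Metadata for one stored screenshot (hypothetical schema)."""
    capture_id: str
    foreground_app: str


def out_of_scope_summary(records, in_scope_apps):
    """Count stored captures per application outside the engagement's scope."""
    allowed = {app.lower() for app in in_scope_apps}
    counts = Counter(
        record.foreground_app.lower()
        for record in records
        if record.foreground_app.lower() not in allowed
    )
    return dict(counts)


if __name__ == "__main__":
    records = [
        CaptureRecord("c1", "annotation-tool"),
        CaptureRecord("c2", "Mail"),
        CaptureRecord("c3", "mail"),
        CaptureRecord("c4", "Banking App"),
    ]
    # Illustrative allowlist containing a single in-scope application.
    print(out_of_scope_summary(records, {"annotation-tool"}))
```

A nonzero count for mail, banking, or another employer’s tools is the signal that retention rules, not just capture settings, need review.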

Understand your position in the supply chain. Mercor didn’t use Delve directly. LiteLLM did. But Mercor relied on LiteLLM, which relied on Delve’s certifications to represent its security posture. Third-party risk management must account for nth-party dependencies, not just direct vendor relationships.
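The nth-party idea can be made concrete with a small graph walk. The sketch below encodes only the reliance relationships described in this article and computes how many hops separate a company from each upstream party. The graph contents and function name are illustrative, and a real vendor inventory would be far larger.

```python
from collections import deque

# Reliance relationships as described in this article (illustrative only).
RELIES_ON = {
    "Mercor": ["LiteLLM"],
    "LiteLLM": ["Delve"],
    "Delve": [],
}


def nth_party_dependencies(graph, root):
    """Map each reachable dependency to its distance from root (1 = direct vendor)."""
    depths = {}
    queue = deque([(root, 0)])
    while queue:
        node, depth = queue.popleft()
        for dependency in graph.get(node, []):
            # Skip the root and anything already seen so cycles terminate.
            if dependency != root and dependency not in depths:
                depths[dependency] = depth + 1
                queue.append((dependency, depth + 1))
    return depths


if __name__ == "__main__":
    print(nth_party_dependencies(RELIES_ON, "Mercor"))
    # prints {'LiteLLM': 1, 'Delve': 2}
```

A review limited to depth 1 would have examined LiteLLM and stopped. The certification provider sits at depth 2, which is where the complaint locates the failure.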

The AI industry built its most valuable intellectual property on top of an interconnected web of data vendors, open-source tools, and shared infrastructure — and that web now constitutes an attack surface that no single company fully controls. The Mercor breach is the first major proof that when that web fails, the consequences cascade at a speed and scale the industry was not prepared for.

This article is based on publicly available court filings, media reporting from TechCrunch, SecurityWeek, The Register, Fortune, Cybernews, Wired, The Record, and public statements from the parties involved. The allegations in the class action complaints are unproven and represent the plaintiffs’ claims. Mercor, Delve, and BerriAI have not been found liable for any of the allegations described. This article is provided for informational purposes only and does not constitute legal advice.