Executive Summary

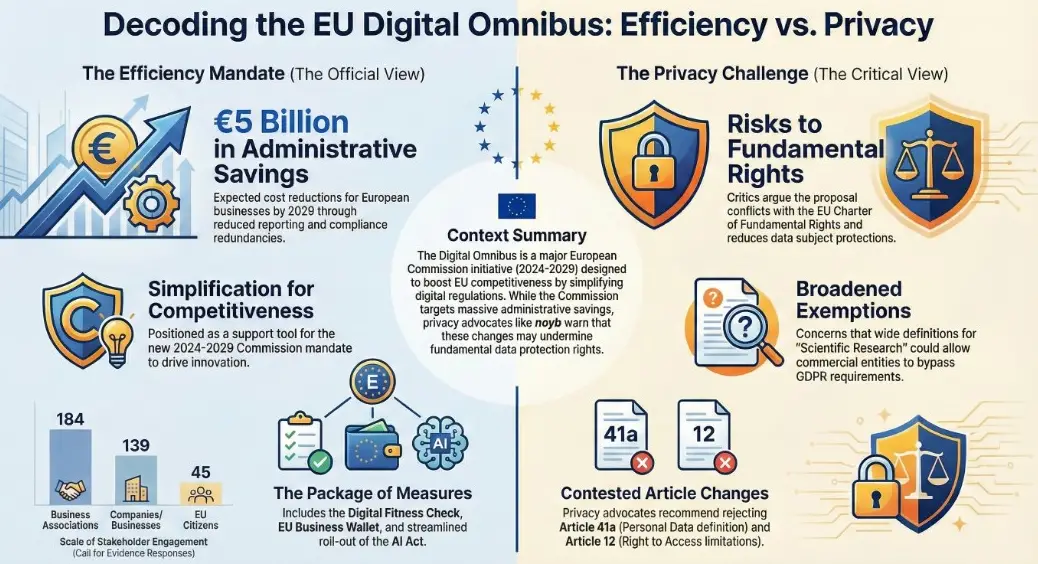

This briefing document provides a synthesized analysis of the European Commission’s proposed “Digital Omnibus” regulation, which seeks to amend the General Data Protection Regulation (GDPR) and ePrivacy rules. The analysis, conducted by the organization noyb, concludes that the proposal is deeply flawed, presenting a significant threat to fundamental data protection rights within the European Union.

The central thesis of the analysis is that the Digital Omnibus, far from achieving its stated goals of simplification and clarification, introduces profound legal uncertainty, reduces the rights of data subjects, and creates systemic conflicts with the EU’s Charter of Fundamental Rights and established case law from the Court of Justice of the European Union (CJEU). The proposal is heavily criticized for being developed without a proper impact assessment, stakeholder consultation, or evidence collection, with many problematic changes appearing at the last minute.

Key findings include:

- Erosion of Core Definitions: The proposed change to the definition of “personal data” in Article 4(1) introduces a subjective standard that would make the application of the GDPR dependent on the internal capabilities and intentions of individual data controllers. This would cripple enforcement, render data subjects’ rights practically meaningless, and create massive legal uncertainty for businesses.
- Creation of Dangerous Loopholes: The introduction of an overly broad definition for “scientific research” in Article 4(38) and new exceptions for processing sensitive data for Artificial Intelligence (AI) in Article 9 threaten to create massive loopholes. These changes would allow purely commercial activities to bypass core GDPR principles like purpose limitation and data minimization, effectively nullifying fundamental rights under the guise of “innovation.”
- Conflict with Fundamental Rights: Multiple proposals are identified as being in direct conflict with Article 8 of the EU Charter of Fundamental Rights and the “necessary and proportionate” standard for limiting such rights. The analysis argues that several amendments reduce protections below the minimum standard set by previous EU law and would likely be annulled by the CJEU.
- Contradiction of CJEU Case Law: The Commission’s proposals are found to selectively interpret or directly contradict a significant body of CJEU rulings that have consistently favored a broad and protective interpretation of data protection principles.

Ultimately, noyb recommends the outright rejection of the most significant and damaging amendments, including those concerning the definitions of personal data and scientific research, and the new allowances for AI. It argues that only a few minor changes offer potential benefits, and even these require improvement. The document urges the EU legislator to scrutinize the proposal closely and to use the ongoing “Digital Fitness Check” as the appropriate venue for a thorough, evidence-based examination of any necessary reforms.


Article 4(38): Definition of “Scientific Research”

Proposal Overview: The Commission proposes to introduce a new, legally binding, and extremely broad definition of “scientific research” into Article 4. The proposed text defines it as “any research which can also support innovation,” including “technological development and demonstration” and “apply[ing] existing knowledge in novel ways.” Activities falling under this definition would benefit from significant exemptions from core GDPR obligations, such as purpose limitation and data subject rights.

Core Criticisms & Analysis:

- Creation of a Massive Loophole: The definition is so vague that it could be abused to shield purely commercial processing activities from GDPR scrutiny. The connection to “innovation” is tenuous: a possibility (“can”) of a byproduct (“also”) that “supports” innovation is sufficient. This could allow big tech companies to claim that their product development or marketing analysis constitutes “scientific research.”
- Conflict with the Charter of Fundamental Rights: The broad exemptions granted for “research” would effectively waive fundamental rights guaranteed under Article 8 of the Charter, including purpose limitation and the right to erasure. Such a blanket allowance for a poorly defined purpose is unlikely to meet the “necessary and proportionate” test required by Article 52(1) of the Charter for limiting fundamental rights. Furthermore, outsourcing the limitation of rights to private “ethical standards” violates the principle that such limitations must be provided for by law.
- Poor Legal Quality: The definition contains over 20 vague and partly contradictory criteria. It conflates research with commercial application and tilts the definition heavily toward “technical development,” potentially excluding legitimate academic research in humanities or natural sciences that is not aimed at “innovation.” This devalues the work of the scientific community.
- Systemic Conflicts: The new definition would likely conflict with various national laws that Member States have already passed to implement the research provisions in Article 89 of the GDPR.
- **Impact on Stakeholders:**
  - Data Subjects: Would see their rights under numerous GDPR articles (e.g., Articles 15, 16, 17, 18, 21) massively limited or abolished whenever a controller claims a “research” purpose.
  - Controllers: While some may abuse the loophole, others face increased legal uncertainty. Actual academic researchers may find their work excluded from the privileges if it doesn’t align with the “innovation” focus.
  - Supervisory Authorities (SAs): Would be tasked with evaluating matters far outside their competence, such as the methodological quality of research or adherence to industry-specific ethical codes, adding to their already strained resources.

Recommendation: Reject. This “last minute” addition to the Omnibus creates a foreseeable and massive loophole for big tech and other actors. It has a direct and severe impact on Charter rights, is poorly drafted, and may even harm legitimate scientific research. Any need for a harmonized definition should be examined thoroughly within the “Digital Fitness Check” with proper expert input.

Article 9(2)(k) & (5): AI and Sensitive Data

Proposal Overview: The proposal introduces a new legal basis in Article 9(2)(k) to permit the processing of special categories of personal data (sensitive data) for the “development and operation” of AI systems. A new Article 9(5) sets conditions, requiring controllers to implement measures to avoid collecting sensitive data. If such data is identified, it must be removed, unless doing so requires “disproportionate effort.” In that case, the controller must simply protect the data from being used in outputs or disclosed.

Core Criticisms & Analysis:

- Conflict with the Charter of Fundamental Rights: This creates a significant limitation on the protections for sensitive data under Article 8 of the Charter without the required proportionality assessment. The proposal’s recital text appears to conduct a “reverse proportionality test,” focusing only on not disproportionately hindering AI developers, which is described as “unheard of.” It also abandons the GDPR’s technology-neutral approach by creating a special privilege for AI.
- Weak and Unenforceable Protections: The proposed safeguards are vague and riddled with loopholes.
  - The obligation to remove sensitive data is nullified by the “disproportionate effort” exception, a term that controllers have historically used to render similar obligations meaningless.
  - The terms “avoid,” “appropriate measures,” and “effectively protect” lack clear, objective standards, making them practically unenforceable for data subjects and SAs.
- Poor Legal Quality & Systemic Conflicts: The proposal uses the extremely broad definition of an “AI system” from the AI Act. While intended to be protective in the AI Act, using this definition for a legal exemption in the GDPR creates an exceptionally broad privilege. The protection offered under Article 9(5) appears even weaker than the general data minimization principle in Article 5(1)(c), creating internal inconsistencies within the GDPR. Recitals justifying the processing of data that is “not necessary” show “structural intellectual and analytical errors” that conflict with core GDPR and Charter principles.

Impact on Stakeholders:

- Data Subjects: Would have no realistic way to enforce these weak protections, given the technical complexity of AI, confidentiality claims by developers, and the vague legal language.
- Controllers: While large or aggressive players may exploit the legal uncertainty, SMEs could face a more complex and risky legal situation.
- Supervisory Authorities (SAs): Would face deep, resource-intensive technical investigations into AI data pipelines to assess vague standards like “disproportionate effort,” making effective oversight highly unlikely.

Recommendation: Reject. The proposed changes fail to solve existing problems for controllers while creating massive legal uncertainty and undermining the high level of protection for sensitive data. The provisions are inconsistent with the GDPR’s structure and the fundamental rights protected by the EU Charter.