In a public confrontation that has no precedent in the history of U.S. defense contracting, Anthropic CEO Dario Amodei published a formal statement today refusing to comply with demands from the Department of Defense — now operating under the Trump administration’s renaming as the “Department of War” — to remove safety restrictions from its Claude AI models.

The standoff has significant implications not just for Anthropic, but for every compliance officer, CISO, and legal team navigating the increasingly fraught terrain of AI governance in 2026.

What the Pentagon Is Demanding

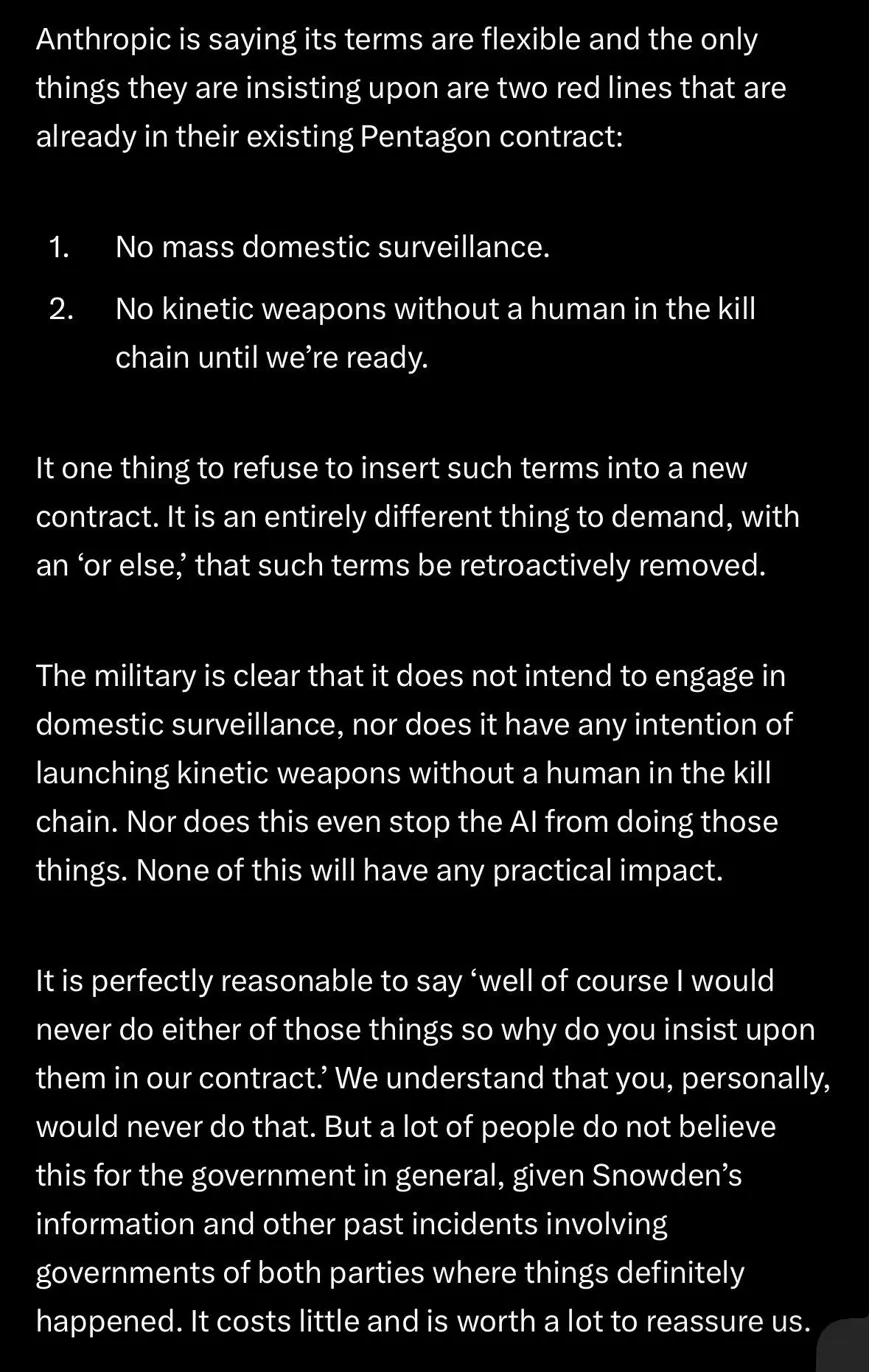

According to Amodei’s statement, the Department of War issued a directive that AI companies must accede to “any lawful use” of their technology — and must remove guardrails that currently prevent two specific capabilities from being deployed in military contexts:

1. Mass domestic surveillance. The DoW is seeking the ability to use Claude to aggregate publicly available data — location records, web browsing history, association maps — at scale and without warrants, assembling what Amodei describes as “a comprehensive picture of any person’s life—automatically and at massive scale.”

2. Fully autonomous lethal weapons. The department wants Claude deployed in weapons systems that select and engage targets without a human in the loop.

Anthropic declined both. The company stated these two restrictions have been in place throughout its existing DoD contracts and have not, to its knowledge, impeded any military operations to date.

The Threats on the Table

The Department of War’s pressure campaign escalated to explicit threats that Anthropic disclosed publicly:

- Removal from military systems if the safeguards are maintained
- Designation as a “supply chain risk,” a classification previously reserved exclusively for adversarial foreign entities and never applied to a U.S. company
- Invocation of the Defense Production Act to force the removal of safety restrictions

Amodei noted the inherent contradiction in the final two threats: one labels Anthropic a security risk; the other declares Claude essential to national security. Politico called the dual threat “inherently contradictory.”

CBS News reported that Defense Secretary Pete Hegseth gave Anthropic until Friday at 5 p.m. to grant unrestricted use of its technology. Anthropic’s statement makes clear it does not intend to capitulate before that deadline.

Why This Matters for Compliance Professionals

This situation is not simply a business dispute between a tech company and its largest government customer. It is a case study in every core tension that AI governance frameworks are struggling to resolve right now.

The “Lawful Use” Problem

The DoW’s “any lawful use” standard is a compliance concept that sounds reasonable until you examine it closely. As Amodei points out, mass domestic surveillance of the kind the DoW is pursuing may be technically legal today — not because it has been authorized, but because legislation has not yet caught up with what AI now makes possible. The Intelligence Community itself has acknowledged in declassified reporting that the warrantless purchase of Americans’ commercial data raises serious privacy concerns.

This is precisely the gap that regulators in Europe have moved to close. Under the EU AI Act’s risk-based framework, whose prohibitions on “unacceptable risk” practices have applied since February 2025, several surveillance-related uses are banned outright, including untargeted scraping of facial images to build recognition databases and, subject to narrow exceptions, real-time remote biometric identification in public spaces for law enforcement. (The Act does carve out systems used exclusively for military, defense, or national security purposes.) The U.S. has no equivalent federal standard. For multinational organizations building AI compliance programs, the divergence is significant. Our 2025 Global Digital Privacy, AI, and Human Rights Landscape briefing covers this regulatory gap in depth.

The Autonomous Weapons Reliability Standard

Anthropic’s position on fully autonomous weapons is not ideological — it is grounded in a technical argument: current frontier AI systems are not reliable enough to make lethal targeting decisions without human oversight. The company offered to collaborate with the DoW on R&D to improve reliability. The department declined.

This is a compliance and liability argument as much as an ethical one. Any organization deploying AI for high-stakes decision-making — lethal or otherwise — faces an equivalent version of this question: at what reliability threshold is it appropriate to remove human review from the loop? The answer has legal, regulatory, and reputational dimensions that go well beyond any single government contract.

For compliance teams managing AI governance under the EU AI Act and emerging U.S. state frameworks, the Anthropic-Pentagon dispute provides a concrete illustration of where “human in the loop” requirements come from — and what happens when they’re contested.

The Supply Chain Risk Designation: A New Compliance Vector

The threat to designate Anthropic a “supply chain risk” introduces a novel compliance risk category that defense contractors and their AI vendors must now account for. The Pentagon has already asked at least two major defense contractors to assess their reliance on Claude. If Anthropic were formally designated a supply chain risk, it would trigger contractual review obligations across the entire DoD vendor ecosystem.

For compliance officers at organizations using Claude — or any AI vendor with potential government exposure — this is a moment to review vendor risk clauses, AI tool dependency inventories, and contingency planning documentation. The DoD’s AI Strategy for the Department of War makes clear that AI is now treated as critical defense infrastructure. That classification cuts both ways.

The Bigger Picture: AI Weaponization and Governance

The timing of this confrontation is notable. It lands in the same week that Bloomberg and Gambit Security disclosed a major breach of nine Mexican government agencies, carried out by a single attacker who used Claude and ChatGPT as an automated hacking arsenal, stealing 150GB of sensitive data including 195 million taxpayer records, voter information, and civil registry files.

That incident demonstrated precisely the dual-use problem Anthropic is trying to manage: the same AI capabilities that make Claude valuable for legitimate intelligence analysis can be turned against government systems with minimal technical expertise and a consumer subscription. The guardrails the DoW wants removed are part of the same safety architecture that — imperfectly — slowed that attacker down.

As we’ve covered in our analysis of AI’s expanding role in psyops, 5th-generation warfare, and cyber espionage, the convergence of AI capability and adversarial intent is accelerating. The question of who controls the guardrails — and under what circumstances they can be removed — is no longer theoretical.

Anthropic was also the first AI company to deploy models in classified government networks, the first at the National Laboratories, and the first to offer custom models for national security customers. The company has forgone several hundred million dollars in revenue by cutting off Claude access for CCP-linked firms, and it has shut down CCP-sponsored cyberattacks that attempted to abuse Claude. This is not a company hostile to national security. It is a company drawing two specific lines.

Anthropic’s Position and What Happens Next

Amodei’s statement leaves the door open to a continued relationship with the DoW — on Anthropic’s terms:

“Our strong preference is to continue to serve the Department and our warfighters—with our two requested safeguards in place. Should the Department choose to offboard Anthropic, we will work to enable a smooth transition to another provider.”

The practical question is whether any competitor — OpenAI, Google DeepMind, Palantir, or defense-native AI vendors — will accept the “any lawful use” standard that Anthropic refused. If they do, it resolves the DoW’s immediate operational need but sets a governance precedent with long-term consequences. If they don’t, the Pentagon faces a more fundamental question about whether frontier AI and military use can coexist without safety constraints.

For compliance professionals, the answer to that question will shape AI governance requirements for years. The global surveillance and digital regulation trends we tracked through early 2026 point toward tighter oversight of exactly the capabilities the DoW is seeking to expand — not looser.

Key Compliance Takeaways

For organizations managing AI governance, vendor risk, and government contracting exposure, this situation highlights several immediate action items:

Review AI vendor contracts for military/government use provisions. If your organization uses Claude or other frontier AI models, understand what use restrictions are built into your agreement and what would change if those restrictions were modified upstream.

Audit your AI inventory against the “autonomous decision-making” threshold. The reliability argument Anthropic is making applies beyond weapons — any AI system making consequential decisions without human review faces equivalent scrutiny under EU AI Act high-risk classifications and emerging U.S. state frameworks.

Monitor the supply chain risk designation outcome closely. A formal DoD designation would create cascading compliance obligations for every contractor with AI tool dependencies. Our comprehensive state privacy and AI compliance guide covers vendor risk management frameworks relevant to this scenario.

Track whether domestic surveillance legal standards change. The Intelligence Community’s acknowledged use of warrantless commercial data purchases is a legal gray area. AI amplification of that capability is what Anthropic is specifically refusing to enable. Regulatory movement in this space — whether through courts, Congress, or executive action — will directly affect AI compliance programs.

The Anthropic-Pentagon standoff is the first major public test of whether AI safety commitments can survive contact with government power. The outcome will define what “responsible AI deployment” means in practice for the decade ahead.

Sources: Anthropic statement (Feb 26, 2026), Bloomberg, CBS News, NBC News, Politico, Axios, Gambit Security

*Related coverage: AI-Powered Hacker Uses Claude and ChatGPT to Steal 150GB of Mexican Government Data | Anthropic’s Proactive Approach to AI Security | *2025 Global AI & Privacy Landscape