Executive Summary

The 2025 social media landscape is defined by a critical shift in digital manipulation: the transition from “legacy” high-volume spam to sophisticated, AI-driven “psychological realism.” An extensive experiment conducted by the NATO Strategic Communications Centre of Excellence reveals that while platform resilience is improving in specific technical areas, the overall ecosystem remains highly vulnerable to low-cost, coordinated inauthentic behavior (CIB).

Key Takeaways:

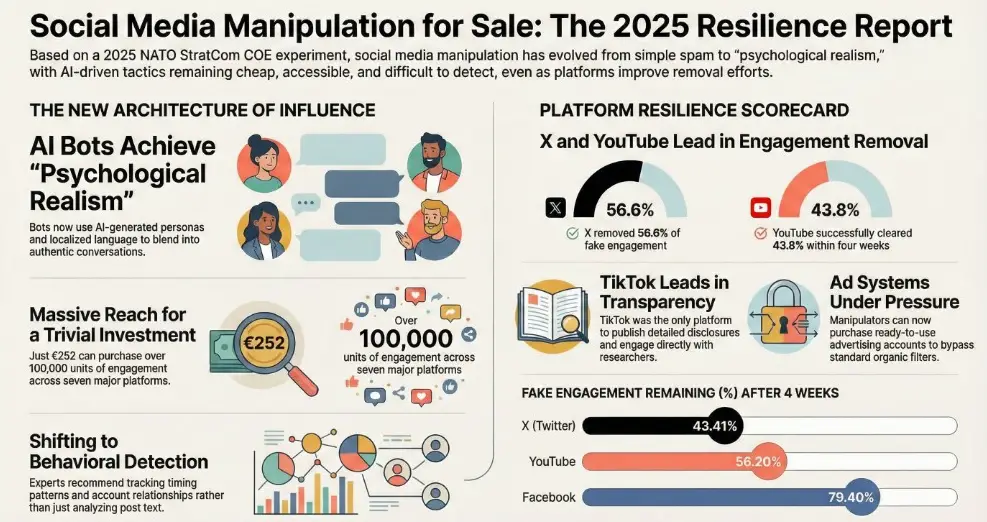

- Behavioral Sophistication: AI bots have moved beyond repetitive “copy-paste” content to “performing authenticity.” They now embed themselves in genuine conversations involving journalists and influencers to earn trust and steer sentiment from within communities.
- Platform Resilience Disparity: Enforcement is inconsistent. X and YouTube lead in removing inauthentic engagement, while Facebook and BlueSky are most effective at blocking automated account creation. TikTok leads in transparency but removes few accounts through routine moderation unless they are specifically escalated for review.
- The Economics of Influence: Digital manipulation remains remarkably cheap. A comprehensive cross-platform campaign can be launched for as little as €252, and AI tools enable fully automated, “closed-loop” content generation and distribution for a trivial investment.
- Crypto-Financial Obscurity: Manipulation providers use high-risk cryptocurrency exchanges and “hot wallet” commingling to obscure money trails. Only 40% of transactions in the 2025 experiment could be traced end-to-end, which sustains a resilient gray market for influence.
- Shifting Narratives: There is a documented move away from purely local political content toward military-related themes, particularly the amplification of pro-China narratives and of military strength across multiple platforms.


1. The Architecture of AI-Enabled Sophistication

Modern synthetic influence has evolved from “broadcasting” to “embedding.” Sophisticated bots now utilize “psychological realism” to mimic human identity and social interaction, making them indistinguishable from real users to the casual observer.

Mechanisms of Psychological Realism

- Credible Identity Construction: Bots use AI-generated imagery for professional-looking personas and adopt “localized naming conventions” and regional nuances to pass as local citizens.
- Contextual Mimicry: AI allows bots to generate “context-aware comments” that match the tone and topic of a thread. This includes emotional exploitation, where bots mimic sentiment to validate or inflame the feelings of real users.
- Relational Dynamics: Unlike legacy bots that interacted in isolated “bot farms,” modern synthetic actors target authentic voices. They focus on engagement with verified profiles, journalists, and influencers, building a facade of credibility through “earned trust.”

Case Study: The Polish Airspace Incident (September 2025)

During a Russian drone incursion into Polish airspace, a network of fake profiles used these techniques to deflect blame from Russia and undermine trust in Polish leadership. Posing as concerned local citizens, with AI-generated visuals and local names, the network “performed authenticity” to manipulate public perception during a kinetic crisis.

2. Platform Resilience: 2025 Performance Metrics

No single platform is fully resilient; rather, strengths and weaknesses vary across different stages of the manipulation lifecycle.

Detection and Removal Capabilities

| Metric | High Resilience | Low Resilience |
|---|---|---|
| Removing Fake Engagement (% of fake engagement remaining; lower is better) | X: 43.41% remaining; YouTube: 56.20% remaining | BlueSky: 100% remaining after 4 weeks |
| Removing Fake Accounts (% removed; higher is better) | VKontakte: 96% removed; X: 82% removed | TikTok: 4% removed; Instagram: 22% removed |
| Blocking Account Creation | Facebook / BlueSky: Immediate suspension of automated accounts | TikTok / YouTube: Allowed registration without CAPTCHA challenges |
| Ad Manipulation Resistance | TikTok: 0% delivery of fake comments; Facebook: <1% delivery | Instagram: Delivered 340% of purchased fake comment volume |
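The table mixes two measures: fake engagement is reported as the share still remaining (lower is better), while fake accounts are reported as the share removed (higher is better). The minimal Python sketch below uses only the figures in the table; it converts the engagement figures into a removal rate and ranks platforms within each row. The function names are illustrative, and because the two rows measure different things (engagement versus accounts), scores should be compared within a row, not across rows.

```python
# Figures copied from the table above. Engagement is reported as "% remaining",
# accounts as "% removed"; converting to a removal rate puts both on one scale.
ENGAGEMENT_REMAINING = {"X": 43.41, "YouTube": 56.20, "BlueSky": 100.0}
ACCOUNTS_REMOVED = {"VKontakte": 96.0, "X": 82.0, "Instagram": 22.0, "TikTok": 4.0}


def removal_rate(remaining_pct: float) -> float:
    """Convert a 'percent remaining' figure into a 'percent removed' figure."""
    return round(100.0 - remaining_pct, 2)


def rank(scores: dict[str, float]) -> list[tuple[str, float]]:
    """Sort platforms from most to least resilient (higher removal is better)."""
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


engagement_removed = {p: removal_rate(r) for p, r in ENGAGEMENT_REMAINING.items()}
print(rank(engagement_removed))
print(rank(ACCOUNTS_REMOVED))
```

On this scale X removed roughly 56.59% of purchased engagement, YouTube 43.8%, and BlueSky none within the four-week window.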

Transparency and Responsiveness

- TikTok: The leader in transparency, publishing detailed disclosures on covert influence operations and engaging directly with research findings.
- Facebook: Showed the greatest improvement in responding to user reports, removing nearly 25% of reported profiles.
- X: Experienced a decline in overall score due to a lack of transparency updates and low responsiveness to manual reporting (removing only 2.5% of reported accounts).

3. The Economics of Manipulation

The barrier to entry for conducting large-scale manipulation remains dangerously low due to the affordability of AI and the persistence of commercial service providers.

Cost Breakdown of Influence

- Content Generation: For a budget of €10, a manipulator can generate hundreds of videos and thousands of images using AI tools.
- Engagement Baskets: In the 2025 experiment, €252 purchased a comprehensive package across seven platforms, resulting in:
  - 17,553 comments
  - 37,814 likes
  - 16,025 shares
  - 27,653 views
- Ad Campaigns: Paid manipulation is more expensive but accessible. A spend of €130 generated over 206,000 views and 200 likes via ad campaigns launched through fake accounts.
- X (Twitter) Views: Prices for views on X dropped significantly in 2025, allowing the acquisition of 156,000 fake views for just €10.
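Normalizing these purchases to a cost per 1,000 delivered units makes the price differences easier to compare. The sketch below uses only the figures above. It rests on two simplifying assumptions: every comment, like, share and view in the basket counts as one "unit," and only the 206,000 views (not the 200 likes) are counted for the ad campaign. The `Purchase` class is illustrative.

```python
from dataclasses import dataclass


@dataclass
class Purchase:
    label: str
    cost_eur: float
    units: int  # total engagement units delivered (assumption: all types weighted equally)

    def cost_per_thousand(self) -> float:
        """Euro cost per 1,000 delivered units (a CPM-style figure)."""
        return self.cost_eur / self.units * 1000


# Basket totals from the list above: comments + likes + shares + views.
basket_units = 17_553 + 37_814 + 16_025 + 27_653

purchases = [
    Purchase("Seven-platform engagement basket", 252, basket_units),
    Purchase("Ad campaign via fake accounts (views only)", 130, 206_000),
    Purchase("Fake views on X", 10, 156_000),
]

for p in purchases:
    print(f"{p.label}: {p.units:,} units, EUR {p.cost_per_thousand():.2f} per 1,000")
```

Under these assumptions the basket delivers 99,045 units at roughly €2.54 per 1,000, the ad campaign about €0.63 per 1,000 views, and purchased X views about €0.06 per 1,000.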

Automated Orchestration

Researchers successfully tested a “closed-loop” system in which AI tools, accessed through APIs such as GPT-4o and Freepik, generated text, created consistent character visuals, and published content across multiple platforms without any human intervention. This demonstrates that large-scale, automated dissemination is technically feasible and highly accessible.

4. The Crypto-Financial Infrastructure

Commercial manipulation providers rely on cryptocurrency to maintain a resilient, low-visibility financial backbone. This infrastructure facilitates cross-border payments while evading traditional financial oversight.

Obfuscation Tactics

- VASP Hot Wallets: Providers route funds through Virtual Asset Service Providers (VASPs) such as Cryptomus and Heleket.
- Commingling: Funds from multiple users are mixed within these hot wallets, breaking “on-chain attribution” and making it nearly impossible to trace individual transactions using public blockchain data.
- Traceability Gap: In testing, only 40% of transactions could be reliably traced end-to-end. Once funds enter custodial wallets, internal movements become invisible.
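The traceability gap can be illustrated with a toy model. This Python sketch is hypothetical: the ledger, the address labels and the `trace` helper are invented for illustration and are not the researchers' methodology. It follows transfers forward from each client and treats a custodial hot wallet as a dead end, because once deposits from several users are commingled, public blockchain data cannot link a particular outgoing transfer to a particular deposit.

```python
from collections import deque

# Hypothetical toy ledger of (sender, receiver) transfers; labels are invented.
TRANSFERS = [
    ("client_a", "provider_wallet"),  # direct payment, visible on-chain
    ("client_b", "vasp_hot_wallet"),  # payment routed through a custodial VASP
    ("client_c", "vasp_hot_wallet"),  # another customer's funds, pooled together
    ("vasp_hot_wallet", "provider_wallet"),
    ("vasp_hot_wallet", "unrelated_user"),
]
CUSTODIAL = {"vasp_hot_wallet"}


def trace(source: str, target: str) -> bool:
    """Return True if funds from `source` can be attributed to `target`.

    Breadth-first search over the ledger that refuses to continue past a
    custodial wallet, since commingled deposits cannot be matched to outflows.
    """
    graph: dict[str, list[str]] = {}
    for sender, receiver in TRANSFERS:
        graph.setdefault(sender, []).append(receiver)

    queue, seen = deque([source]), {source}
    while queue:
        node = queue.popleft()
        if node == target:
            return True
        if node in CUSTODIAL:
            continue  # attribution breaks here
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return False


clients = ["client_a", "client_b", "client_c"]
traced = [c for c in clients if trace(c, "provider_wallet")]
print(traced, f"{len(traced) / len(clients):.0%} traceable")
```

Only the direct payment from `client_a` can be attributed to the provider; the two payments routed through the VASP cannot, even though the provider does ultimately receive funds from that hot wallet.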

Market Scale and Regulatory Concerns

Analysis of specific provider addresses reveals significant transaction volumes between September 2023 and October 2025:

- RU1 (Russia-based): Received approximately USD 265,261 via 681 transactions.
- UK2 (UK-based): Received approximately USD 123,714.
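For scale, the RU1 figures imply an average payment size, worked out below. This is a simple mean over all 681 transfers; the distribution of payment sizes is not described, and no transaction count is given for UK2, so no comparable average can be derived for it.

$$
\bar{v}_{\mathrm{RU1}} = \frac{\text{USD } 265{,}261}{681\ \text{transactions}} \approx \text{USD } 389.52\ \text{per transaction}
$$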

Sanctions Implications: The use of major exchanges like Binance by Russia-based operators (such as RU1) raises concerns regarding Council Regulation (EU) No. 833/2014, which prohibits providing crypto-custody services to Russian nationals or residents.

5. Strategic Recommendations for Detection

The shift in bot behavior necessitates a corresponding shift in detection strategies. Content-based filtering alone is no longer sufficient when AI generates unique text for every post.

- Prioritize Behavioral Detection: Focus on how accounts act rather than what they post. Key indicators include cross-platform behavioral synchronization and unnatural temporal patterns (e.g., sustained low-volume activity to avoid spam triggers).
- Map Relational Dynamics: Analyze “conversation-level influence.” Identify accounts that predominantly target authentic voices and high-visibility threads while showing reduced interaction with other fake accounts.
- Follow the Money: Integrate financial forensics into influence analysis. Tracking cryptocurrency flows through high-risk exchanges can identify the service providers funding synthetic networks.
- Continuous Monitoring: Move away from viewing manipulation as isolated “campaigns.” Implement longitudinal analysis to track how narratives evolve and where synthetic actors penetrate genuine dialogue spaces.
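The behavioral and relational indicators above can be turned into simple per-account scores. The following Python sketch is hypothetical: the three heuristics (regularity of posting intervals, cross-platform timing overlap, and the share of replies aimed at authentic accounts), the 60-second window and the toy data are illustrative assumptions, not detection rules taken from the report. In practice such scores would be combined and calibrated against baselines for genuine users.

```python
from statistics import mean, pstdev


def interval_cv(timestamps: list[float]) -> float:
    """Coefficient of variation of gaps between consecutive posts.

    Human activity is often bursty (CV near or above 1); a scheduler emitting
    low-volume posts at steady intervals yields a CV near 0.
    """
    ts = sorted(timestamps)
    gaps = [b - a for a, b in zip(ts, ts[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        return float("nan")  # not enough data to judge regularity
    return pstdev(gaps) / mean(gaps)


def sync_score(a: list[float], b: list[float], window: float = 60.0) -> float:
    """Fraction of account A's posts within `window` seconds of a post by account B.

    Computed across platforms, high scores for many account pairs are one hint
    of centrally scheduled, coordinated behavior.
    """
    if not a:
        return 0.0
    hits = sum(1 for t in a if any(abs(t - u) <= window for u in b))
    return hits / len(a)


def authentic_target_share(reply_targets: list[str], authentic: set[str]) -> float:
    """Share of an account's replies aimed at known authentic, high-visibility accounts."""
    if not reply_targets:
        return 0.0
    return sum(t in authentic for t in reply_targets) / len(reply_targets)


# Invented toy data: post timestamps in seconds.
bot_x = [0, 3600, 7200, 10800, 14400]         # hourly, like clockwork
bot_tiktok = [30, 3630, 7190, 10820, 14410]   # same schedule on another platform
human = [0, 40, 95, 5000, 5100, 20000]        # bursty activity

print(round(interval_cv(bot_x), 2))   # 0.0
print(interval_cv(human) > 1)         # True
print(sync_score(bot_x, bot_tiktok))  # 1.0
print(round(authentic_target_share(["@journalist", "@mp", "@bot_17"], {"@journalist", "@mp"}), 2))  # 0.67
```

Each score on its own is weak evidence; the sketch is meant to show how "how accounts act" can be quantified, with the thresholds left to the analyst.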